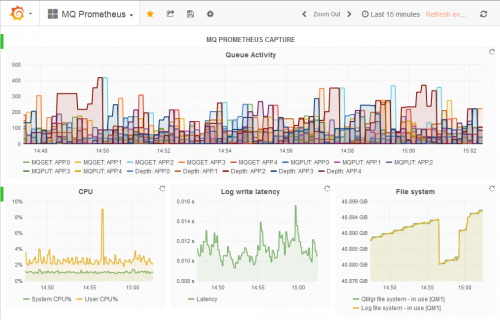

The MQ metric exporters are a set of Go programs that deliver queue manager statistics and status to databases such as Prometheus and InfluxDB. They have recently been updated, giving more consistent function and much easier configuration. This post will explore and explain these changes.

For an introduction to these exporters, see some of my earlier posts in this blog.

What are the exporters?

There are currently 6 exporters available in the same GitHub repository:

- Prometheus

- InfluxDB

- OpenTSDB

- CloudWatch

- JSON

- Collectd

They all have a similar pattern, subscribing to metrics published regularly by queue managers, querying the status of various objects, and then getting the numbers into a database or output to a suitable file format.

For historic and market-share reasons, the Prometheus exporter has always been the first component that got any updates. When I added new function, I often only made it available in that exporter and perhaps the JSON one.

But recently a few issues were raised about the other exporters. They showed both that those exporters had not had as much maintenance and that people were still trying to use them.

Problems

I knew that the underlying MQ Go packages needed to be reworked so that they could be used with Go modules, and that this would result in changes (even if only mechanical ones) to all of the exporters.

I was also a bit frustrated that adding new function needed very similar but not identical code to be added to all of the exporters.

Finally, I was finding that the startup scripts that set the parameters of which objects to monitor were getting unwieldy. Every new object type required the scripts to get bigger, additional options were being asked for, and the command-line parameters were getting confusing.

Solutions

Configuration with YAML

There are lots of configuration parameters that need to be given to the exporters via the command line. Changing the parameters usually needed the startup script to be changed. I decided it would be helpful to move all the configuration into a separate file. While the existing command parameters can continue to be used, it’s now easier to just use a single -f parameter that points at an external configuration file. This is an example of the format:

    global:
      useObjectStatus: true
      useResetQStats: false
      logLevel: INFO
      metaprefix: ""
      pollInterval: 30s
      rediscoverInterval: 1h
      tzOffset: 0h

    connection:
      queueManager: QM1
      clientConnection: false
      replyQueue: SYSTEM.DEFAULT.MODEL.QUEUE

    objects:
      queues:
        - APP.*
        - "!SYSTEM.*"
        - "!AMQ.*"
      queueSubscriptionSelector:
        - PUT
        - GET
      channels:
        - SYSTEM.*
        - TO.*
      topics:
      subscriptions:

    prometheus:
      port: 9157
      metricsPath: "/metrics"
      namespace: ibmmq

The file is split into blocks, the first ones being common to all of the exporters and the final section being specific to the backend database or output route. The field names are similar to the command-line flags but I changed them a little for consistency. Versions of this file are included for all of the exporters.

One particular formatting change is to use lists or arrays instead of a single comma-separated string for things such as the patterns of queues to monitor.
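If you have existing comma-separated values to carry over, the conversion to a list is mechanical. The following is only a sketch of that idea and not code from the exporters: the function names splitPatterns and yamlList are made up for illustration, and it assumes that quoting every entry is acceptable (quoting is needed anyway for patterns that start with "!").

```go
package main

import (
	"fmt"
	"strings"
)

// splitPatterns turns a legacy comma-separated pattern string into a list,
// trimming surrounding whitespace and dropping empty entries.
// (Hypothetical helper, not part of the exporters.)
func splitPatterns(csv string) []string {
	var patterns []string
	for _, p := range strings.Split(csv, ",") {
		if p = strings.TrimSpace(p); p != "" {
			patterns = append(patterns, p)
		}
	}
	return patterns
}

// yamlList renders the patterns as a YAML sequence under the given key.
// Every value is double-quoted, which keeps a leading "!" from being
// read as a YAML tag. It assumes plain ASCII object-name patterns.
func yamlList(key string, patterns []string) string {
	var b strings.Builder
	fmt.Fprintf(&b, "%s:\n", key)
	for _, p := range patterns {
		fmt.Fprintf(&b, "  - %q\n", p)
	}
	return b.String()
}

func main() {
	// The same patterns as in the example file, in the old single-string style.
	legacy := "APP.*,!SYSTEM.*,!AMQ.*"
	fmt.Print(yamlList("queues", splitPatterns(legacy)))
}
```

The output can be pasted under the objects block of the configuration file, adjusting the indentation to match.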

Using Modules

Once I had a version of the ibmmq and mqmetric packages available in a module, it was fairly easy to make the changes in the exporter source code. Mostly it just required insertion of “v5” in a number of import statements.
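To show the shape of that change, here is a small sketch (not taken from the exporters) that rewrites an older import path into its v5 form. The helper name toV5ImportPath is invented for this illustration; the paths are those of the mq-golang repository that holds the ibmmq and mqmetric packages.

```go
package main

import (
	"fmt"
	"strings"
)

const (
	// Import path prefix used before the packages were available as a module.
	oldPrefix = "github.com/ibm-messaging/mq-golang/"
	// The same prefix with the major version element inserted.
	newPrefix = "github.com/ibm-messaging/mq-golang/v5/"
)

// toV5ImportPath inserts "v5" into an mq-golang import path.
// Paths that already contain it, or that belong to other packages,
// are returned unchanged. (Hypothetical helper for illustration.)
func toV5ImportPath(path string) string {
	if strings.HasPrefix(path, newPrefix) || !strings.HasPrefix(path, oldPrefix) {
		return path
	}
	return newPrefix + strings.TrimPrefix(path, oldPrefix)
}

func main() {
	for _, p := range []string{
		"github.com/ibm-messaging/mq-golang/ibmmq",
		"github.com/ibm-messaging/mq-golang/mqmetric",
	} {
		fmt.Printf("%s -> %s\n", p, toV5ImportPath(p))
	}
}
```

In practice this is a search-and-replace across the import blocks of each exporter rather than something done at run time.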

Resetting the dependency chain has also allowed updates to all of the other packages used by the exporters, such as the Prometheus client interface.

One choice I had to make with the update was whether or not to use vendoring. The compiler module support is meant to make it unnecessary, automatically downloading correct levels of the prereq packages. But I decided to continue with it so all of the dependencies have been copied into the vendor directory in the repository ready to use immediately. You can of course force a redownload of what is under that directory by using go mod vendor.

The buildMonitors.sh script that is included in the repository has been updated to take account of the new structure. When you run the script it will create and execute a container where all of the exporters are compiled and copied back into your local directory. So you do not need to set up your own build environment. The main change that was needed was to make sure the script sets the current directory to the root of the repository (where the vendor directory lives) before compiling, instead of being somewhere higher up in the tree. Working in the directory with the go.mod file tells the compiler which module it is building and where to find the dependencies.

Common Function

All of the exporters have been brought to a common level of function. They can report on the same object types including the z/OS pageset/bufferpool status. This did mean that metric names from some of the older exporters needed to change to include an object type, though the Prometheus and JSON packages were already doing that.

While there still needs to be exporter-specific code on exactly how the metrics are emitted, there is now more common code especially in the configuration processing.

Other enhancements

I’ve also added some other features at the same time, based on suggestions raised by users in the Issues section of the repository or in direct email.

Optional reduction in number of subscriptions

To gather the metrics for queues, four subscriptions are usually made for each queue. You can see the equivalent options by running amqsrua -m QM1 -c STATQ where you can select 4 options. For many situations, the only really interesting metrics are the number of Puts and Gets. The queueSubscriptionSelector option can be used to select which subscriptions are actually made. Setting it to PUT and GET, as shown in the YAML file above, halves the number of subscriptions, reducing resource usage in the queue manager and the number of messages that need to be processed. For large sets of queues this can be very helpful.
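The arithmetic is simple but worth seeing with numbers. This sketch is not exporter code: the class names are my assumption of the four STATQ choices that amqsrua offers, the count of 1000 queues is just an example, and treating an empty selector as "subscribe to everything" is also an assumption.

```go
package main

import "fmt"

// statqClasses holds the four per-queue subscription classes.
// The names are assumed from the choices amqsrua offers for STATQ.
var statqClasses = []string{"OPENCLOSE", "INQSET", "PUT", "GET"}

// subscriptionsPerQueue returns how many of the classes are subscribed to
// for a given selector. An empty selector is assumed to mean all classes.
func subscriptionsPerQueue(selector []string) int {
	if len(selector) == 0 {
		return len(statqClasses)
	}
	count := 0
	for _, class := range statqClasses {
		for _, s := range selector {
			if s == class {
				count++
				break
			}
		}
	}
	return count
}

// totalSubscriptions is the number of subscriptions needed for a set of queues.
func totalSubscriptions(queues int, selector []string) int {
	return queues * subscriptionsPerQueue(selector)
}

func main() {
	const queues = 1000 // example figure only
	fmt.Println("all classes:     ", totalSubscriptions(queues, nil))
	fmt.Println("PUT and GET only:", totalSubscriptions(queues, []string{"PUT", "GET"}))
}
```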

Meaningful error codes on exit

A couple of error situations now have identifying exit codes so they can be dealt with in any launching script. In particular, trying to connect to a standby instance of a queue manager will return a value of 30. It’s a shame that we can’t use real MQRC numbers but commands can only return 0-255. So I might have a launch script that loops trying to start the exporter while the return code is 30, and exits on any other error code.
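A launcher along those lines could be written in any language. Here is a minimal sketch in Go, assuming only what is described above: an exit value of 30 means "standby instance, try again" and anything else ends the loop. The function names, the 10 second delay and the usage text are my own choices for illustration.

```go
package main

import (
	"errors"
	"fmt"
	"os"
	"os/exec"
	"time"
)

// standbyExitCode is the value returned when the exporter has tried to
// connect to a standby instance of the queue manager.
const standbyExitCode = 30

// runOnce runs the command to completion and returns its exit code,
// or -1 if the command could not be started at all.
func runOnce(name string, args ...string) int {
	cmd := exec.Command(name, args...)
	cmd.Stdout, cmd.Stderr = os.Stdout, os.Stderr
	err := cmd.Run()
	if err == nil {
		return 0
	}
	var exitErr *exec.ExitError
	if errors.As(err, &exitErr) {
		return exitErr.ExitCode()
	}
	return -1
}

// supervise calls run repeatedly while it reports standbyExitCode,
// sleeping for delay between attempts. It returns the first other code.
func supervise(run func() int, delay time.Duration) int {
	for {
		code := run()
		if code != standbyExitCode {
			return code
		}
		time.Sleep(delay)
	}
}

func main() {
	if len(os.Args) < 2 {
		fmt.Fprintln(os.Stderr, "usage: supervise <exporter> [args...]")
		return
	}
	code := supervise(func() int {
		return runOnce(os.Args[1], os.Args[2:]...)
	}, 10*time.Second)
	os.Exit(code)
}
```

Passing the run step in as a function keeps the retry logic separate from the process handling, so the loop can be exercised without starting a real exporter.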

Summary

I hope that these changes make the tools easier to use. Please continue to give feedback through the usual routes so I can see what further enhancements might be useful.

This post was last updated on November 27th, 2021 at 02:59 pm