The sample monitoring packages here use a variety of techniques to collect metrics from the queue manager. The most recent release (v5.7.0) adds a further alternative approach.

Continue reading “MQ Monitoring: using queue manager STATISTICS events”

Tag: prometheus

Native HA CRR metrics & mqperfck

There will, no doubt, be full discussions elsewhere of the new MQ Native HA Cross-region Replication feature. But one aspect of it seemed particularly relevant to other articles on this site: monitoring the CRR metrics.

The MQ 9.4.2 release also includes another tool, mqperfck, that introduces some additional metrics. I’ll also talk about how that might affect other monitoring tools.

This post was last updated on March 1st, 2025 at 09:55 am

MQ Metrics with OpenTelemetry

As I promised in a recent article, I am coming back to the OpenTelemetry topic. This time, it’s going to be about another pillar of the observability requirements – integrating MQ’s metrics with OpenTelemetry.

Continue reading “MQ Metrics with OpenTelemetry”

This post was last updated on March 12th, 2024 at 07:27 am

Monitoring MQ availability

One focus of new development in MQ in recent years has been High Availability and Disaster Recovery. Technologies such as RDQM and Native HA, and automatically managed logfiles, give a range of possibilities for ensuring your messaging systems continue to run reliably. Alongside the core function, there are also metrics and status information to show more about what is going on. And so the latest updates to the open source monitor programs add collection of some of these recently-added values. This should simplify monitoring MQ availability.

Continue reading “Monitoring MQ availability”

This post was last updated on February 22nd, 2023 at 10:30 am

Durable subscriptions to minimise object handle use

Collection of the metrics that the queue manager publishes requires that each monitored queue has at least one associated subscription. This post describes an interesting option where collection programs use durable subscriptions to minimise object handle use when running MQ monitoring. It reduces the requirements for configuring the MAXHANDS attribute on the queue manager. It’s also a nice demonstration of how subscriptions could be used in any application.

This post was last updated on June 30th, 2022 at 07:23 am

New features with the MQ Go metric collectors

The mq-metric-samples collectors that send IBM MQ metrics and status data to a range of databases, ready to be viewed in Grafana, have just been enhanced to collect additional information. The Prometheus collector has also been extended so that it can continue providing limited status even when the queue manager is down.

The new metrics have all been suggested by users of the package either directly or via issues raised in the GitHub repository. Many previous articles on here show more about the collectors.

The InfluxDB collector is also refreshed for a new version of the database.

Continue reading “New features with the MQ Go metric collectors”

This post was last updated on November 27th, 2021 at 02:59 pm

Updates for MQ metric exporters

The MQ metric exporters are a set of Go programs that deliver queue manager statistics and status to databases such as Prometheus and Influx. They have recently been updated, giving more consistent function and a much easier configuration. This post will explore and explain these changes.

For an introduction to these exporters, see some of my earlier posts in this blog.

Continue reading “Updates for MQ metric exporters”

This post was last updated on November 27th, 2021 at 02:59 pm

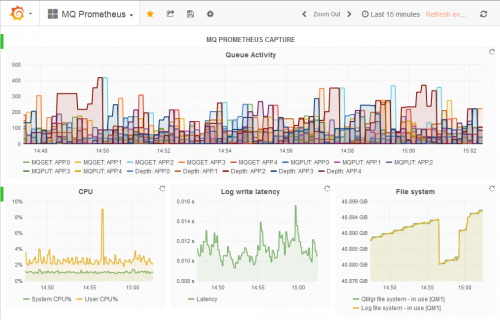

Using Prometheus to monitor MQ channel status

In 2016 I wrote about how MQ’s resource statistics can work with a number of time-series databases, including Prometheus. This permits monitoring using the same tools that many customers use for monitoring other products. It allows easy creation of dashboards using tools such as Grafana.

Since that original version, we’ve made a number of enhancements to the packages that underpin that monitoring capability. For example, more database options were added; a JSON formatter appeared. One notable change was when we split the monitoring agent programs into a separate GitHub repository, making it easier to work with just the pieces you needed.

And now, I’ve released some changes that allow Prometheus and generic JSON processors to see some key channel status information. In particular, a Grafana dashboard can easily highlight channels that are not running.

Continue reading “Using Prometheus to monitor MQ channel status”

This post was last updated on November 25th, 2019 at 09:53 am

Adding resource statistics to your applications

MQ V9 added resource monitoring statistics that you can subscribe to. In this post I’m going to show how you can generate similar statistics from your own applications using the same model. For example, you may want to track how many successful and how many failed messages are being processed.

See how to add statistics generation to your applications

This post was last updated on November 19th, 2019 at 07:41 pm

IBM MQ – Further integration with open-source monitors

An earlier blog entry showed how to integrate MQ with the Prometheus database, capturing statistics that can then be shown in a Grafana dashboard. In this article, I’ll show how that initial work has been extended to work with more databases and collection tools.

The latest updates allow MQ to write directly to InfluxDB and OpenTSDB databases, and also to provide data to collectd.

Continue reading “IBM MQ – Further integration with open-source monitors”

This post was last updated on November 27th, 2021 at 03:00 pm