Recently, we have been creating and publishing a lot of material to help educate people about MQ. You can find that here.

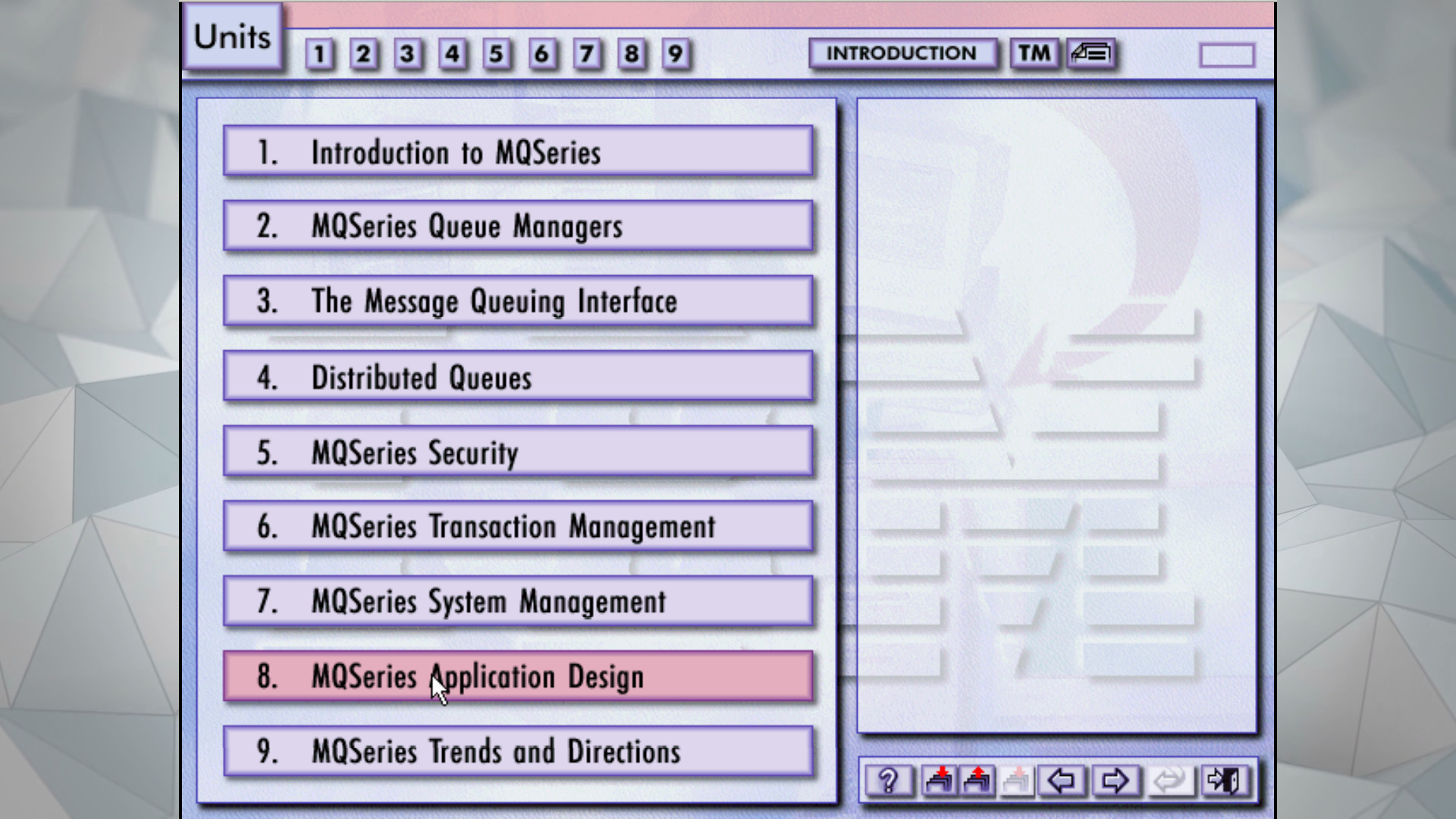

But how might you have learned about MQ in its early days? While hunting through the archives for something that I was, in the end, unable to find, I came across a piece of educational material created over 20 years ago.

You can now see what it was like in this video.

Just a few thoughts that I had:

- While the style may be different and the details vary, a lot of the content is recognisably the same.

- It’s nice to see the MQ Dancers logo return, even as a tiny icon.

- In 1996, the course ended by saying that MQ was “long-term”. Yes, they got that right.

I hope you enjoy it.

This post was last updated on November 20th, 2019 at 09:14 pm