The sample monitoring packages here use a variety of techniques to collect metrics from the queue manager. The most recent release (v5.7.0) adds a further alternative approach.

Continue reading “MQ Monitoring: using queue manager STATISTICS events”

Tag: grafana

MQ Metrics with OpenTelemetry

As I promised in a recent article, I am coming back to the OpenTelemetry topic. This time, it’s going to be about another pillar of the observability requirements – integrating MQ’s metrics with OpenTelemetry.

Continue reading “MQ Metrics with OpenTelemetry”

This post was last updated on March 12th, 2024 at 07:27 am

Monitoring MQ availability

High Availability and Disaster Recovery have been a focus of new development in MQ in recent years. Technologies such as RDQM and Native HA, along with automatically managed logfiles, give a range of options for keeping your messaging systems running reliably. Alongside the core function, there are also metrics and status information that show more about what is going on. The latest updates to the open source monitor programs add collection of some of these recently-added values, which should simplify monitoring MQ availability.

Continue reading “Monitoring MQ availability”

This post was last updated on February 22nd, 2023 at 10:30 am

Durable subscriptions to minimise object handle use

Collection of the metrics that the queue manager publishes requires that each monitored queue has at least one associated subscription. This post describes an interesting option where collection programs use durable subscriptions to minimise object handle use when running MQ monitoring. It reduces the requirements for configuring the MAXHANDS attribute on the queue manager. It’s also a nice demonstration of how subscriptions could be used in any application.

This post was last updated on June 30th, 2022 at 07:23 am

New features with the MQ Go metric collectors

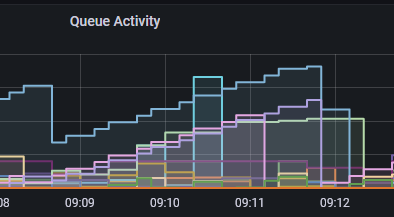

The mq-metric-samples collectors that send IBM MQ metrics and status data to a range of databases, ready to be viewed in Grafana, have just been enhanced to collect additional information. The Prometheus collector has also been extended so that it can continue providing limited status even when the queue manager is down.

The new metrics have all been suggested by users of the package either directly or via issues raised in the GitHub repository. Many previous articles on here show more about the collectors.

The InfluxDB collector is also refreshed for a new version of the database.

Continue reading “New features with the MQ Go metric collectors”

This post was last updated on November 27th, 2021 at 02:59 pm

Using Loki and Grafana with MQ logs

It is odd how often similar questions come at the same time from unrelated places. Because of the projects and articles I’ve written about visualising MQ’s metrics in Grafana, I recently had a couple of people asking about using that same front-end to work with log-file data. Specifically, would it be possible to use Loki and Grafana together with MQ? My immediate reaction was that I didn’t know what Loki was in this context, but clearly they couldn’t be asking about a Norse god or Marvel character. Instead, after a very short search and a few minutes reading, I guessed that it ought to be possible to use it. Which was my reply.

But of course, I’m not likely to leave it there when I learn about a new tool. Especially when I have a reasonable starting point like a working local Grafana setup. And so I have done some very quick experiments to prove that the approach really can work. On the way I’ll also take a brief digression into using logrotate.

Continue reading “Using Loki and Grafana with MQ logs”

This post was last updated on February 9th, 2021 at 02:37 pm

Viewing MQ configurations with Grafana

This post shows how you can use Grafana to selectively view information about your MQ configuration. Which may sound a little odd. Grafana’s strength is primarily to show statistics and metrics in pretty graphs. So why would we want to use it to look at queue definitions? The answer is that you usually would not! There are many more appropriate tools for displaying and updating the queue manager configuration – even the MQ Explorer or MQ Console are better. But there may be times when a limited set of information may be desirable, so you can link from a graph to a different view, within the same tool.

But another important aspect that I hope this shows is the power of a common data format. The techniques I’ll show here could be used to combine a variety of different tools, and perhaps this will give you some ideas.

Continue reading “Viewing MQ configurations with Grafana”

This post was last updated on November 25th, 2019 at 02:18 pm

Using Prometheus to monitor MQ channel status

In 2016 I wrote about how MQ’s resource statistics can work with a number of time-series databases, including Prometheus. This permits monitoring using the same tools that many customers use for monitoring other products. It allows easy creation of dashboards using tools such as Grafana.

Since that original version, we’ve made a number of enhancements to the packages that underpin that monitoring capability. For example, more database options were added; a JSON formatter appeared. One notable change was when we split the monitoring agent programs into a separate GitHub repository, making it easier to work with just the pieces you needed.

And now, I’ve released some changes that allow Prometheus and generic JSON processors to see some key channel status information. In particular, a Grafana dashboard can easily highlight channels that are not running.

Continue reading “Using Prometheus to monitor MQ channel status”

This post was last updated on November 25th, 2019 at 09:53 am

Adding resource statistics to your applications

MQ V9 added resource monitoring statistics that you can subscribe to. In this post I’m going to show how you can generate similar statistics from your own applications using the same model. For example, you may want to track how many successful and how many failed messages are being processed.
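As a rough illustration of the idea, here is a minimal sketch of interval-based counters for successful and failed messages. It is not the code from the full post: the `MessageStats` class and its field names are hypothetical, and it assumes that each report covers only the interval since the previous report, with counters reset afterwards. Actually publishing the report to a topic is left out.

```python
import json
import time
from dataclasses import dataclass, field


@dataclass
class MessageStats:
    """Interval counters for processed messages (hypothetical helper)."""

    succeeded: int = 0
    failed: int = 0
    interval_start: float = field(default_factory=time.monotonic)

    def record(self, ok: bool) -> None:
        """Count one processed message as a success or a failure."""
        if ok:
            self.succeeded += 1
        else:
            self.failed += 1

    def snapshot(self) -> dict:
        """Return the counts for the current interval, then reset them."""
        now = time.monotonic()
        report = {
            "succeeded": self.succeeded,
            "failed": self.failed,
            "interval_seconds": now - self.interval_start,
        }
        self.succeeded = 0
        self.failed = 0
        self.interval_start = now
        return report


# Example: three messages processed, one of which failed.
stats = MessageStats()
for ok in (True, True, False):
    stats.record(ok)

first = stats.snapshot()
# The serialised report is what an application might hand to its own publisher.
payload = json.dumps(first)
```

An application would call `record()` from its message-processing loop and `snapshot()` on a timer, passing the serialised report to whatever it uses to publish.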

See how to add statistics generation to your applications

This post was last updated on November 19th, 2019 at 07:41 pm

IBM MQ – Using AWS CloudWatch to monitor queue managers

In this final(?) blog entry of a series, I’m going to show how you can monitor MQ queue managers using Amazon’s CloudWatch service.

Continue reading “IBM MQ – Using AWS CloudWatch to monitor queue managers”

This post was last updated on November 23rd, 2019 at 12:28 am